How We Doubled a Public Tech Company’s Organic Traffic in Four Months

After eighteen months of flat performance, a structured AI workflow more than doubled our client’s organic traffic in four months. Here’s how we built it.

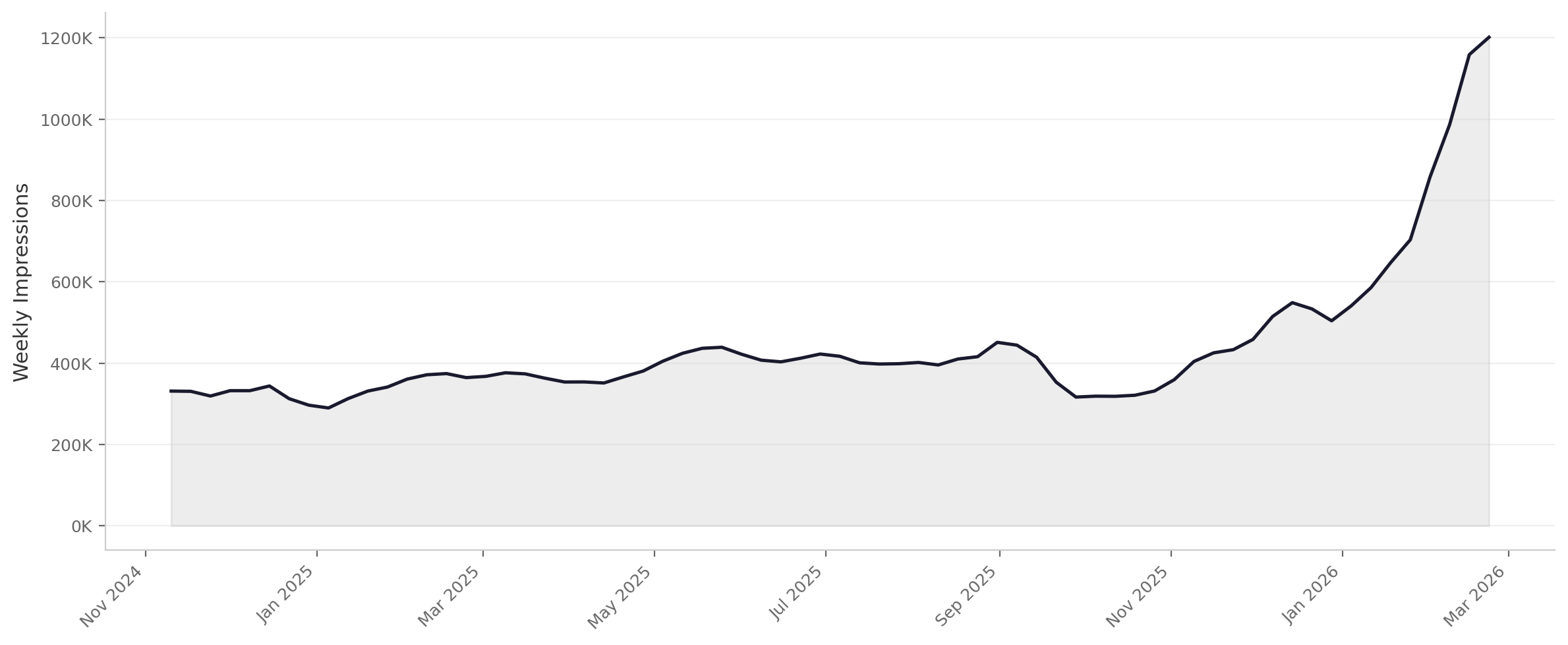

Weekly website impressions, November 2024 to March 2026

Our client, a publicly traded technology company, needed a system that could produce accurate, on-brand content for highly technical products at a pace that a traditional content process couldn’t match. To get there, we spent a couple of months, starting in October 2025, building the right scaffolding first and then letting AI work inside it. Once we did, the results were remarkable: our client went from roughly 370,000 impressions a week in November 2024 to over a million in February 2026.

The company sells complex, highly technical products and services, and its website reflected that complexity in the wrong way: pages were long, wordy, and inconsistent. Product descriptions read like spec sheets; service pages read like lists of nearly indistinguishable nouns. Organic traffic had been flat for more than eighteen months, and when visitors did arrive, the pages weren’t structured to convert them. Dozens of pages, from top-level pages down to individual product and service pages, needed new content and new design. And we wanted to move fast and show results early.

We designed the system with three foundational resources in mind:

Messaging specs. The client’s marketing department provided a detailed messaging specification document for each product, service, and industry vertical. These were structured factsheets containing elevator pitches, key claims, audience profiles, and competitive positioning. They represented the client’s own carefully vetted language about what their products do, who they serve, and why it matters. For our workflow, they served as the single source of truth: the AI could draw on any claim in these documents and was heavily discouraged from reaching beyond them.
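If you want to replicate this, a messaging spec can be modeled as a small record. The sketch below is hypothetical: the field names mirror the contents described above (elevator pitch, key claims, audience profiles, competitive positioning), but the `MessagingSpec` class, its `allowed_claims` method, and the sample values are illustrative placeholders, not the client’s actual format.

```python
from dataclasses import dataclass, field


@dataclass
class MessagingSpec:
    """Hypothetical schema for one messaging spec (product, service, or vertical).

    Field names follow the kinds of content the specs contained; the real
    documents' layout is not reproduced here.
    """
    subject: str
    elevator_pitch: str
    key_claims: list[str] = field(default_factory=list)
    audience_profiles: list[str] = field(default_factory=list)
    competitive_positioning: str = ""

    def allowed_claims(self) -> list[str]:
        """Everything the copy may assert: the pitch, key claims, and positioning."""
        claims = [self.elevator_pitch, *self.key_claims]
        if self.competitive_positioning:
            claims.append(self.competitive_positioning)
        return claims


# Placeholder values only; not the client's content.
spec = MessagingSpec(
    subject="Example Product",
    elevator_pitch="Hypothetical one-sentence pitch.",
    key_claims=["Hypothetical claim A.", "Hypothetical claim B."],
    audience_profiles=["Hypothetical audience."],
)
print(spec.allowed_claims())
# ['Hypothetical one-sentence pitch.', 'Hypothetical claim A.', 'Hypothetical claim B.']
```

Keeping the claims as one flat list gives the model, or any checker, an explicit inventory of what may be said.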

Strict page templates. We developed a standardized template for each page type, with aggressive character limits. Fixing the structure and length of every page type kept the outputs concise and consistent from one page to the next.
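Character limits like these are easy to enforce mechanically. Here is a minimal sketch, assuming a page is represented as a dictionary of named fields; `PageTemplate`, the field names, and the limits are hypothetical placeholders, not the limits we actually used.

```python
from dataclasses import dataclass


@dataclass
class PageTemplate:
    """Hypothetical page template: named fields, each with a hard character limit."""
    page_type: str
    limits: dict[str, int]  # field name -> maximum characters

    def violations(self, page: dict[str, str]) -> list[str]:
        """Return every way `page` breaks the template; an empty list means it passes."""
        problems = []
        for name, limit in self.limits.items():
            text = page.get(name)
            if text is None:
                problems.append(f"{name}: missing")
            elif len(text) > limit:
                problems.append(f"{name}: {len(text)} chars (limit {limit})")
        # Fields the template does not define are also flagged.
        for name in sorted(page.keys() - self.limits.keys()):
            problems.append(f"{name}: not part of the {self.page_type} template")
        return problems


# Illustrative limits only; not the limits used on the client's site.
product_page = PageTemplate("product", {"headline": 60, "subhead": 140, "body": 600})
print(product_page.violations({"headline": "Short headline", "body": "x" * 700}))
# ['subhead: missing', 'body: 700 chars (limit 600)']
```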

A standards and style guide. By analyzing the full set of messaging specifications, we distilled the client’s brand voice into explicit guidelines covering tone, vocabulary, sentence structure, and what to emphasize and what to avoid. We also codified the project’s overall goals and, critically, gave specific instructions for how to use the other two resources. For example, the guide required the copy to be as concrete as possible while drawing only on claims that appeared in the messaging specs. This made the three resources work as an integrated system rather than a collection of reference documents.
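Some parts of a style guide, such as vocabulary to avoid and sentence length, can also be checked by a script before anyone reads the draft. The sketch below is hypothetical: `StyleRules`, `lint`, the listed terms, and the 25-word ceiling are invented placeholders, and judgments about tone and emphasis still need a reviewer.

```python
import re
from dataclasses import dataclass, field


@dataclass
class StyleRules:
    # Hypothetical rules; the client's actual guide is not reproduced here.
    avoid: dict[str, str] = field(default_factory=lambda: {
        "leverage": "use",
        "best-in-class": "(state the specific advantage)",
    })
    max_words_per_sentence: int = 25


def lint(text: str, rules: StyleRules) -> list[str]:
    """Flag avoided terms and overlong sentences; an empty list means no findings."""
    findings = []
    lowered = text.lower()
    for term, suggestion in rules.avoid.items():
        # Whole-word match so "leverage" doesn't fire inside "leveraged".
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            findings.append(f"avoid '{term}': {suggestion}")
    # Naive sentence split: terminal punctuation followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        words = len(sentence.split())
        if words > rules.max_words_per_sentence:
            findings.append(f"long sentence ({words} words): {sentence[:40]}...")
    return findings


print(lint("We leverage AI to deliver best-in-class results.", StyleRules()))
# ["avoid 'leverage': use", "avoid 'best-in-class': (state the specific advantage)"]
```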

We loaded all three resources into a Claude project, where they served as persistent context for every page we generated. The AI was never working from a blank slate or a single prompt.
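Outside the Claude app, similar persistent context could be approximated by assembling the resources into one block of text and sending it with every request (for example, as the `system` prompt in Anthropic’s Messages API). This is a minimal sketch, assuming each resource is already plain text; the `build_context` name, the section headings, and their ordering are assumptions, not a description of how Claude projects store knowledge.

```python
def build_context(guide: str, template: str, spec: str,
                  approved_examples: list[str]) -> str:
    """Hypothetical helper: join the shared resources into one context string."""
    sections = [
        ("Standards and style guide", guide),
        ("Page template", template),
        ("Messaging spec (single source of truth)", spec),
    ]
    # Previously approved pages, fed back in as examples of success.
    sections += [(f"Approved example {i}", page)
                 for i, page in enumerate(approved_examples, start=1)]
    return "\n\n".join(f"## {title}\n{body.strip()}" for title, body in sections)


context = build_context("Be concrete.", "Headline: 60 characters max.",
                        "Hypothetical claim A.", [])
print(context)
```

Because the same string accompanies every request, each page is generated against identical rules, which is the property that mattered here.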

The generation workflow itself was deliberately iterative:

1. Generate a first draft from the relevant messaging specification, strictly following the page template and the standards and style guide.

2. Review the draft against the standards and style guide, checking for structural compliance and tonal consistency.

3. Make the required edits.

4. Conduct a final review focused specifically on empirical claims, verifying that nothing in the draft states or implies anything that can’t be traced back to the messaging specs.

5. Feed approved, published pages back into the project knowledge as examples of success.
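Stripped to its control flow, the workflow above is a draft-review-edit loop with a claim check as the exit gate. The sketch below is illustrative only and rests on assumptions: `draft`, `review`, and `edit` are hypothetical callables (a model call, a human reviewer, or both), and `unsupported_sentences` swaps the step 4 claim review for a crude word-overlap heuristic that is easy to fool and no substitute for a careful read.

```python
import re
from typing import Callable


def _content_words(text: str) -> set[str]:
    # Lowercased alphanumeric tokens longer than three characters.
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 3}


def unsupported_sentences(text: str, claims: list[str], min_overlap: float = 0.6) -> list[str]:
    """Heuristic stand-in for step 4: flag sentences whose content words are
    mostly absent from every individual source claim."""
    claim_words = [_content_words(c) for c in claims]
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        words = _content_words(sentence)
        if not words:
            continue
        best = max((len(words & cw) / len(words) for cw in claim_words), default=0.0)
        if best < min_overlap:
            flagged.append(sentence)
    return flagged


def produce_page(
    draft: Callable[[], str],                  # hypothetical drafting call
    review: Callable[[str], list[str]],        # returns issues, [] if clean
    edit: Callable[[str, list[str]], str],     # applies the required edits
    claims: list[str],                         # allowed claims from the messaging spec
    max_rounds: int = 3,                       # maximum number of edit rounds
) -> str:
    text = draft()                                       # step 1: first draft
    for round_number in range(max_rounds + 1):
        issues = review(text)                            # step 2: standards review
        if not issues:                                   # step 4: claims, once structure/tone pass
            issues = [f"untraceable: {s}" for s in unsupported_sentences(text, claims)]
        if not issues:
            return text                                  # ready for human approval
        if round_number == max_rounds:
            break
        text = edit(text, issues)                        # step 3: required edits
    raise RuntimeError(f"draft still failing after {max_rounds} rounds of edits")
```

In this sketch, step 5 stays outside the loop: the caller appends a returned page to the example set only after it has been approved and published, so unreviewed output never becomes an example.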

The AI did much of the work, but inside a tight, well-governed framework. That scaffolding is what made the speed possible without sacrificing accuracy.

Once everyone was comfortable with the workflow, we began turning around large batches of pages: five to six major overhauls, each with new design and new content, within two weeks.

After more than eighteen months of flat organic performance, the site’s organic traffic began climbing within two months of the first pages going live. Within four months, it had doubled.

There’s a growing body of applied research, including from Anthropic’s engineering team, on how the quality of AI output depends less on the model itself than on the quality of the context you give it. The best results come not from more sophisticated prompting but from structured, high-signal information: grounding documents that define what’s true, constraints that define what good looks like, and clear guidance on how to make decisions.

That matched our experience exactly. We couldn’t write individual prompts for dozens of technical product pages and expect consistent quality. And even if we could, at that point we might as well just write the pages by hand. So instead, we built a system: source-of-truth documents that defined what could be said, structural templates that defined how to say it, and a standards guide that tied the pieces together. The AI operated within those parameters. The output was reliable because the parameters were right.

This approach isn’t limited to writing copy. The same principle applies anywhere an organization needs AI to produce accurate, consistent work at scale: code, technical documentation, regulatory filings, internal communications. The core time investment belongs in the scaffolding: the factbooks, the standards, the review checkpoints.

Want results like these? Contact us here and let’s talk about your project.